· 11 min read

Flatten Array Interview Problem

What if you got this interview question where you had to flatten an array? How would you do it?

In this post, I’ll cover 3 approaches to solving the problem for flattening an array, which is a common problem that can help get you started into the world of recursion and iteration as you move forward with more difficult problems that you may get asked in interviews.

- a naive recursive approach

- an optimized recursive approach

- an iterative approach

We’ll cover trade offs, time and space complexity, and the underlying mechanics of how each version works.

Each one fixes a problem the previous one left behind.

Trees, Not Boxes: Seeing Arrays Differently

Arrays always seemed to baffle me, especially nested ones. I could never wrap my head around the structure and easily “understand” it.

I began to work with Git at some point and saw that branches are used to contain various code changes, and that visual representation made me think of a tree.

In reality though, arrays are like trees. In the flatten array problem, it’s important to see arrays and nested arrays as branches and leaves. In fact, in many problems looking at them in this way makes it generally much easier to see in your head.

As you recursively go into a tree, you’re collecting leaves.

- If I’m at a leaf? Collect it.

- If I’m at a branch? Go deeper.

While that isn’t the core point of this post, I wanted to mention it because it was a game changer for me in terms of how I visualize and understand nested arrays.

Recursive Strategy

We would normally start with a base function that takes in an array value, and build out a function that uses a finalArray inside.

When analyzing the approach of recursion, the ultimate goal is to set our function up so that it can call itself internally to ultimately produce our final results.

Since we’re recursively going through an array, we would use a for loop to iterate through the array and check each value. We can work with it based on its known length.

You may ask yourself, why the for loop, why not a while loop or forEach?

It’s about what you know ahead of time

for: “I know how many times to loop”, fixed size at each recursion level.while: “I don’t know how many times, just keep going until you’re done”, stack size is dynamic.

Remember this distinction. It’s going to come back later when we build the iterative version.

If we know that value[i] is an array, we can do something interesting. We call the function recursively on that nested array, and then spread the results into our finalArray. If it’s not an array, it’s a leaf, just collect it.

function recursiveFlattenArray(value) {

let finalArray = [];

for (let i = 0; i < value.length; i++) {

if (Array.isArray(value[i])) {

finalArray.push(...recursiveFlattenArray(value[i]));

} else {

finalArray.push(value[i]);

}

}

return finalArray;

}This works. Let’s trace through it with [1, [2, [3, [4]]]] to see what actually happens under the hood.

Flow of recursion

Branch 0: [1, [2, [3, [4]]]] creates its own finalArray, []

found leaf 1, collect it → [1]

found branch [2, [3, [4]]], go deeper...

Branch 1: [2, [3, [4]]] creates its own finalArray, []

found leaf 2, collect it → [2]

found branch [3, [4]], go deeper...

Branch 2: [3, [4]] creates its own finalArray, []

found leaf 3, collect it → [3]

found branch [4], go deeper...

Branch 3: [4] creates its own finalArray, []

found leaf 4, collect it → [4]

no more items, return [4]

spread [4] into Branch 2's finalArray → [3, 4]

return [3, 4]

spread [3, 4] into Branch 1's finalArray → [2, 3, 4]

return [2, 3, 4]

spread [2, 3, 4] into Branch 0's finalArray → [1, 2, 3, 4]

return [1, 2, 3, 4]As you can see, each box is a function call, and each box has its own finalArray that it uses to store the results of its processing.

When a box encounters a nested array, it calls itself to process that nested array, and once it gets the results back, it spreads those results into its own finalArray. This process continues until all boxes have processed their respective arrays and returned their results, ultimately leading to the final flattened array.

This version works perfectly fine. But there’s a cost hiding in plain sight.

Optimizing The Recursive Approach

Look at the trace again. Every single box creates its own finalArray. Every single spread copies elements that have already been copied before. The element 4 gets pushed into Box 3’s array, then spread into Box 2’s, then spread into Box 1’s, then spread into Box 0’s. That one element got touched four times.

Now imagine an input like [1, [2, [3, [4, [5, [6, [7, [8]]]]]]]]. The deepest element gets copied at every level on the way back up. In a deeply nested chain, each element at depth d gets touched d times.

That gives us a time complexity of O(n x d), where n is the total number of elements and d is the maximum nesting depth. For a pathologically deep array, that’s a real problem.

Each recursive call creates a new array and then spreads its contents back up. We’re doing redundant copying at every single level.

What if we didn’t create a new array each time?

One Array: The Accumulator Fix

Instead of each box creating its own finalArray and spreading results back up, what if we passed a single shared array down through every recursive call?

We can do this by adding a second parameter with a default value of []. The caller doesn’t need to pass it, it defaults on the first call, and from that point on, every recursive call shares the same reference.

function recursiveFlattenArray(value, results = []) {

for (let i = 0; i < value.length; i++) {

if (Array.isArray(value[i])) {

recursiveFlattenArray(value[i], results);

} else {

results.push(value[i]);

}

}

return results;

}No spread. No intermediate arrays. Just one results array that every level pushes directly into.

Let’s trace through the same input [1, [2, [3, [4]]]] again so you can compare:

Branch 0: [1, [2, [3, [4]]]] receives shared results []

found leaf 1, collect it → results is now [1]

found branch [2, [3, [4]]], go deeper with same results...

Branch 1: [2, [3, [4]]] receives shared results [1]

found leaf 2, collect it → results is now [1, 2]

found branch [3, [4]], go deeper with same results...

Branch 2: [3, [4]] receives shared results [1, 2]

found leaf 3, collect it → results is now [1, 2, 3]

found branch [4], go deeper with same results...

Branch 3: [4] receives shared results [1, 2, 3]

found leaf 4, collect it → results is now [1, 2, 3, 4]

no more items, return [1, 2, 3, 4]

return [1, 2, 3, 4]

return [1, 2, 3, 4]

return [1, 2, 3, 4]Every element gets touched exactly once. Each value is pushed directly into the shared array the moment we find it. No copying. No spreading. No intermediate arrays being created and discarded.

That gives us a time complexity of O(n), which is the best you can possibly do, because you have to at least look at every element once.

The accumulator fixes the time problem. But there’s still a problem that recursion itself can’t fix.

The Call Stack

In an interview, you may get asked about space complexity, as in, how much extra memory does each version use? To answer that, we think about what’s sitting in memory at the deepest point of recursion.

Both recursive versions, the naive spread version and the optimized version, use O(d) call stack frames, where d is the maximum nesting depth. Every recursive call adds a frame to the call stack, and those frames don’t get cleaned up until the deepest call returns and the stack starts unwinding.

The difference between the two is what else sits in memory alongside those frames:

- Spread version: Call frames + intermediate arrays at every level.

- Accumulator version: Call frames only.

The accumulator version is leaner, but both share the same fundamental limitation: they rely on the call stack, and the call stack has a hard size limit. Feed either version an array nested a few thousand levels deep and you’ll hit a stack overflow.

This is where the iterative approach becomes relevant. Not because it’s conceptually simpler, it’s actually a bit more work to think through, but because it moves the “memory of where I am” from the call stack to the heap. The heap is much larger. No stack overflow.

- The accumulator fixes the time problem.

- The iterative version fixes the crashing problem.

Ditching Recursion: The Pringles Can

When thinking about the iterative approach, we need to figure out what data structure can replace the call stack. We need something we can “come back to”, something that lets us set aside work for later while we handle what’s in front of us.

That means we need a stack: last in, first out (LIFO).

It’s worth noting that in JavaScript, an array and a stack are the same thing physically. They’re both just arrays. The difference is how you use them. A stack means you only ever interact with one end, the top. Push onto the top, pop from the top. Like a Pringles can: you can only reach the top chip.

If you were to accidentally grab too many chips from the top, you’d place the extra you grabbed back on the top of the chip stack within the can. Conceptually for me, this made the most sense when visualizing the process.

Remember earlier when I said while is for “I don’t know how many times, just keep going until you’re done”? This is that moment. Our stack size changes dynamically as we pop items off and push unwrapped contents back on. We don’t know up front how many iterations we’ll need.

Here’s the idea: take the input, put it on the stack. Pop the top item. If it’s a leaf, collect it. If it’s an array, unwrap it and push its contents back onto the stack. Keep going until the stack is empty.

Stack: | 1 | [2, [3, [4]]] | pop [2, [3, [4]]]

Stack: | 1 | 2 | [3, [4]] | unwrap, push contents

... keep going ...

Eventually:

Stack: | 1 | 2 | 3 | 4 | all leaves

Pop them off: 4, 3, 2, 1 collected in reverse orderThere’s one catch you might have already noticed. Because we’re using LIFO, we end up collecting elements in reverse order. We’ll deal with that in the next section.

function iterativeFlattenArray(value) {

let results = [];

let stack = [...value];

while (stack.length > 0) {

let item = stack.pop();

if (Array.isArray(item)) {

stack.push(...item);

} else {

results.push(item);

}

}

return results;

}We spread the initial value into a new array so we don’t mutate the original input. From there, it’s just a loop: pop, check, either collect or unwrap. Once the stack is empty, we reverse and return.

No recursion. No call stack growth. The stack lives on the heap, which means we’re not going to hit a stack overflow no matter how deep the nesting goes.

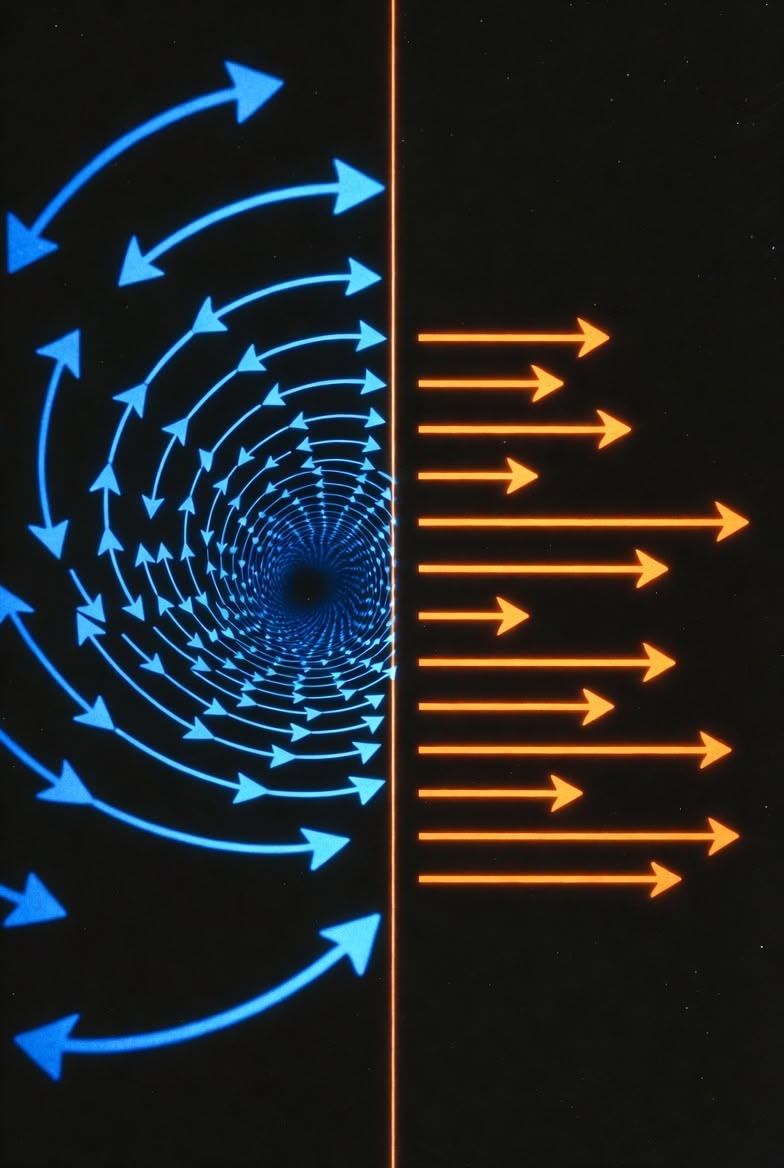

Approaching LIFO Order

Because we’re using a stack (LIFO), the natural collection order is reversed. There are a couple ways to handle this, and in an interview you might want to mention the tradeoffs.

Option 1: pop + results.push() + .reverse() at the end

This is what we used above. pop is O(1), push is O(1), and .reverse() is a single O(n) pass at the very end. Total cost: O(n).

Option 2: pop + results.unshift() during iteration

Instead of reversing at the end, you insert each element at the front of the results array as you go. This avoids the reverse step, but unshift is O(n) per insertion because it shifts every existing element over. Total cost: O(n²).

Option 3: shift + results.push()

Instead of popping from the top, you shift from the front (making it a queue/FIFO approach). This gives you the correct order naturally, no reverse needed. But shift is O(n) per call for the same reason as unshift. Total cost: O(n²).

| Strategy | Collection Cost | Order Fix | Total |

|---|---|---|---|

pop + push + .reverse() | O(1) per element | One O(n) pass at end | O(n) |

pop + unshift | O(n) per element | Not needed | O(n²) |

shift + push | O(n) per element | Not needed | O(n²) |

The first option wins. The reverse at the end is a small price to pay compared to doing O(n) work on every single element during collection.

Complexity Overview

| Naive Recursive (spread) | Recursive (accumulator) | Iterative (stack) | |

|---|---|---|---|

| Time Complexity | O(n x d) | O(n) | O(n) |

| Space Complexity | O(d) frames + intermediate arrays | O(d) frames only | O(n) on heap |

| Memory Location | Call stack | Call stack | Heap |

| Stack Overflow Risk | Yes | Yes | No |

Each version solves a real problem

- The naive recursive version gets us a working solution and a clear mental model.

- The accumulator version fixes the hidden time cost by eliminating redundant copying.

- The iterative version fixes the crash risk by moving the work off the call stack entirely.

In an interview, showing that you can start with the simplest version and then articulate why and how you’d improve it is often more valuable than jumping straight to the optimal solution. The journey through these three versions demonstrates that you understand the tradeoffs, not just the syntax.

Final Code

function iterativeFlattenArray(value) {

let results = [];

let stack = [...value];

while (stack.length > 0) {

let item = stack.pop();

if (Array.isArray(item)) {

stack.push(...item);

} else {

results.push(item);

}

}

return results.reverse();

}